Google Naptime: AI-Assisted Vulnerability Research

Google's Naptime enhances an LLM's ability to identify and analyse vulnerabilities accurately and reproducibly by equipping it with a specialised toolset. The framework is an important step forward for AI-assisted vulnerability research: it lets security researchers streamline their workflow and focus on the most critical aspects of their work, and maybe even take a well-deserved nap or two!

Since mid-2023, Google researchers have been working on a framework for LLM-assisted vulnerability research, with a particular focus on automating variant analysis. The project is called "Naptime" because of its potential to let researchers take regular naps while it helps out with their jobs. Please don't tell their manager.

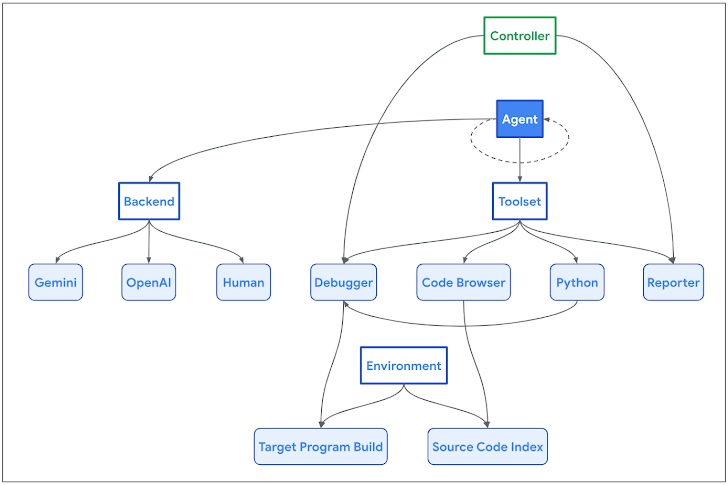

Naptime uses a specialised architecture to enhance an LLM's ability to perform vulnerability research. A key element of this architecture is grounding through tool use, equipping the LLM with task-specific tools to improve its capabilities and ensure verifiable results. This approach allows for automatic verification of the agent's output, a critical feature considering the autonomous nature of the system.

Naptime architecture.
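To make the idea of automatic verification concrete, here is a minimal sketch of how a claimed crash could be checked without trusting the model's own description. It is not Naptime's code: `RunResult`, `run_target`, `is_verified_crash` and the signal list are hypothetical names and choices made for illustration. The only outside facts relied on are that AddressSanitizer reports begin with `ERROR: AddressSanitizer` on stderr and that Python's `subprocess` reports death by signal as a negative return code.

```python
import signal
import subprocess
from dataclasses import dataclass

# Assumption: which signals count as security-relevant is our choice here;
# the source only says the debugger captures "various signals".
CRASH_SIGNALS = {
    int(getattr(signal, name))
    for name in ("SIGSEGV", "SIGABRT", "SIGBUS", "SIGILL", "SIGFPE")
    if hasattr(signal, name)
}


@dataclass
class RunResult:
    returncode: int  # negative means "killed by signal -returncode" (subprocess convention)
    stderr: str


def run_target(cmd: list[str], data: bytes, timeout: float = 10.0) -> RunResult:
    """Run the (hypothetical) ASan-instrumented target with `data` on stdin."""
    proc = subprocess.run(cmd, input=data, capture_output=True, timeout=timeout)
    return RunResult(proc.returncode, proc.stderr.decode(errors="replace"))


def is_verified_crash(result: RunResult) -> bool:
    """Accept a claim only if the run shows an ASan report or a crash signal."""
    if "ERROR: AddressSanitizer" in result.stderr:
        return True
    return result.returncode < 0 and -result.returncode in CRASH_SIGNALS
```

Because the check depends only on what the target program actually did, a confident but wrong claim from the agent is rejected.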

The Naptime architecture is centred around the interaction between an AI agent and a target codebase. The agent is provided with a set of specialised tools designed to mimic the workflow of a human security researcher.

- Code Browser tool enables the agent to navigate through the target codebase, much like how engineers use Chromium Code Search. It provides functions to view the source code of a specific entity (function, variable, etc.) and to identify locations where a function or entity is referenced. While this capability is excessive for simple benchmark tasks, it is designed to handle large, real-world codebases, facilitating exploration of semantically significant code segments in a manner that mirrors human processes.

- Python tool enables the agent to run Python scripts in a sandboxed environment for intermediate calculations and to generate precise and complex inputs to the target program.

- Debugger tool grants the agent the ability to interact with the program and observe its behaviour under different inputs. It supports setting breakpoints and evaluating expressions at those breakpoints, enabling dynamic analysis. This interaction helps refine the AI's understanding of the program based on runtime observations. To ensure consistent reproduction and easier detection of memory corruption issues, the program is compiled with AddressSanitizer, and the debugger captures various signals indicating security-related crashes.

- Reporter tool provides a structured mechanism for the agent to communicate its progress. The agent can signal a successful completion of the task, triggering a request to the Controller to verify if the success condition (typically a program crash) is met. It also allows the agent to abort the task when unable to make further progress, preventing stagnation.
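As a rough illustration of two of the tools above, the sketch below models a Code Browser over a tiny in-memory index and a Reporter that defers to a verification callback standing in for the Controller. Every name here (`CodeBrowser`, `show_source`, `find_references`, `Reporter`, `done`, `abort`) is hypothetical, and the toy C snippets are invented for the example; the real tools' interfaces are not described in this article. The Python and Debugger tools are left out because they need a real sandbox and debugger.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass
class CodeBrowser:
    """Toy code browser over an in-memory {entity name: source text} index."""
    index: dict[str, str]

    def show_source(self, name: str) -> str:
        return self.index.get(name, f"<no definition found for {name!r}>")

    def find_references(self, name: str) -> list[str]:
        # Whole-word match, excluding the entity's own definition.
        pattern = re.compile(rf"\b{re.escape(name)}\b")
        return sorted(
            owner for owner, src in self.index.items()
            if owner != name and pattern.search(src)
        )


@dataclass
class Reporter:
    """Lets the agent claim success (subject to verification) or give up."""
    verify: Callable[[bytes], bool]  # stand-in for the Controller's check
    status: str = "running"

    def done(self, crashing_input: bytes) -> bool:
        ok = self.verify(crashing_input)
        self.status = "verified" if ok else "running"
        return ok

    def abort(self, reason: str) -> None:
        self.status = f"aborted: {reason}"


browser = CodeBrowser({
    "main": "int main() { char buf[64]; read_input(buf); return parse_header(buf); }",
    "parse_header": "int parse_header(char *buf) { return copy_field(buf, 64); }",
    "copy_field": "int copy_field(char *buf, int n) { char out[16]; memcpy(out, buf, n); return n; }",
})
print(browser.find_references("copy_field"))  # ['parse_header']

# Toy success condition: any input longer than the 16-byte buffer "crashes".
reporter = Reporter(verify=lambda data: len(data) > 16)
print(reporter.done(b"A" * 8), reporter.status)   # False running
print(reporter.done(b"A" * 32), reporter.status)  # True verified
```

Note that a rejected `done` call leaves the status at `running`, so the agent can keep investigating rather than ending on an unverified claim.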

The system is model-agnostic and backend-agnostic, providing a self-contained vulnerability research environment. This environment is not limited to use by AI agents; human researchers can also leverage it, for example, to generate successful trajectories for model fine-tuning.
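One way to picture the model-agnostic design is an episode loop that only sees a "policy": any callable that looks at the trajectory so far and picks the next tool call. In the sketch below, an LLM backend and a human replaying a script would plug in the same way, and the recorded trajectory is the kind of artefact that could feed fine-tuning. This is a sketch under assumptions, not Naptime's API: `run_episode`, `scripted_policy` and the tool names `show_source`, `report_done` and `report_abort` are all hypothetical.

```python
from typing import Any, Callable

Step = tuple[str, dict[str, Any]]                # (tool name, keyword arguments)
Policy = Callable[[list[dict[str, Any]]], Step]  # trajectory so far -> next call


def run_episode(policy: Policy,
                tools: dict[str, Callable[..., Any]],
                max_steps: int = 8) -> list[dict[str, Any]]:
    """Drive one episode and return the recorded trajectory."""
    trajectory: list[dict[str, Any]] = []
    for _ in range(max_steps):
        name, kwargs = policy(trajectory)
        if name in tools:
            observation = tools[name](**kwargs)
        else:
            observation = f"unknown tool: {name}"
        trajectory.append({"tool": name, "args": kwargs, "observation": observation})
        # Stop on an abort, or on a success claim that was actually verified.
        if name == "report_abort" or (name == "report_done" and observation is True):
            break
    return trajectory


def scripted_policy(steps: list[Step]) -> Policy:
    """A 'human researcher' policy: replay a fixed list of tool calls."""
    remaining = iter(steps)

    def policy(trajectory: list[dict[str, Any]]) -> Step:
        return next(remaining, ("report_abort", {"reason": "script exhausted"}))

    return policy


# Toy tools standing in for the real environment.
tools = {
    "show_source": lambda name: f"// source of {name}",
    "report_done": lambda data: len(data) > 16,
    "report_abort": lambda reason: reason,
}
trajectory = run_episode(
    scripted_policy([
        ("show_source", {"name": "copy_field"}),
        ("report_done", {"data": b"A" * 32}),
    ]),
    tools,
)
print([step["tool"] for step in trajectory])  # ['show_source', 'report_done']
```

Swapping `scripted_policy` for a function that queries a model would leave `run_episode` and the tools unchanged, which is the property the paragraph above describes.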

Google's Naptime enables an LLM to perform vulnerability research that closely mimics the iterative, hypothesis-driven approach of human security experts. This architecture not only enhances the agent's ability to identify and analyse vulnerabilities but also ensures that the results are accurate and reproducible.